(…to storage transfer speeds…)

I was recently asked what could be done to maximize storage transfer speeds in physical and virtual Windows servers. Not the “sexiest” topic for a blog post, I know, but it should be interesting reading for any SysAdmin who wants to get the most performance from their IT environment, or for those IT Administrators who suffer from user or customer complaints about system performance.

As it happens, I had just completed some testing on this very subject and thought it would be helpful to share the results publicly in this article.

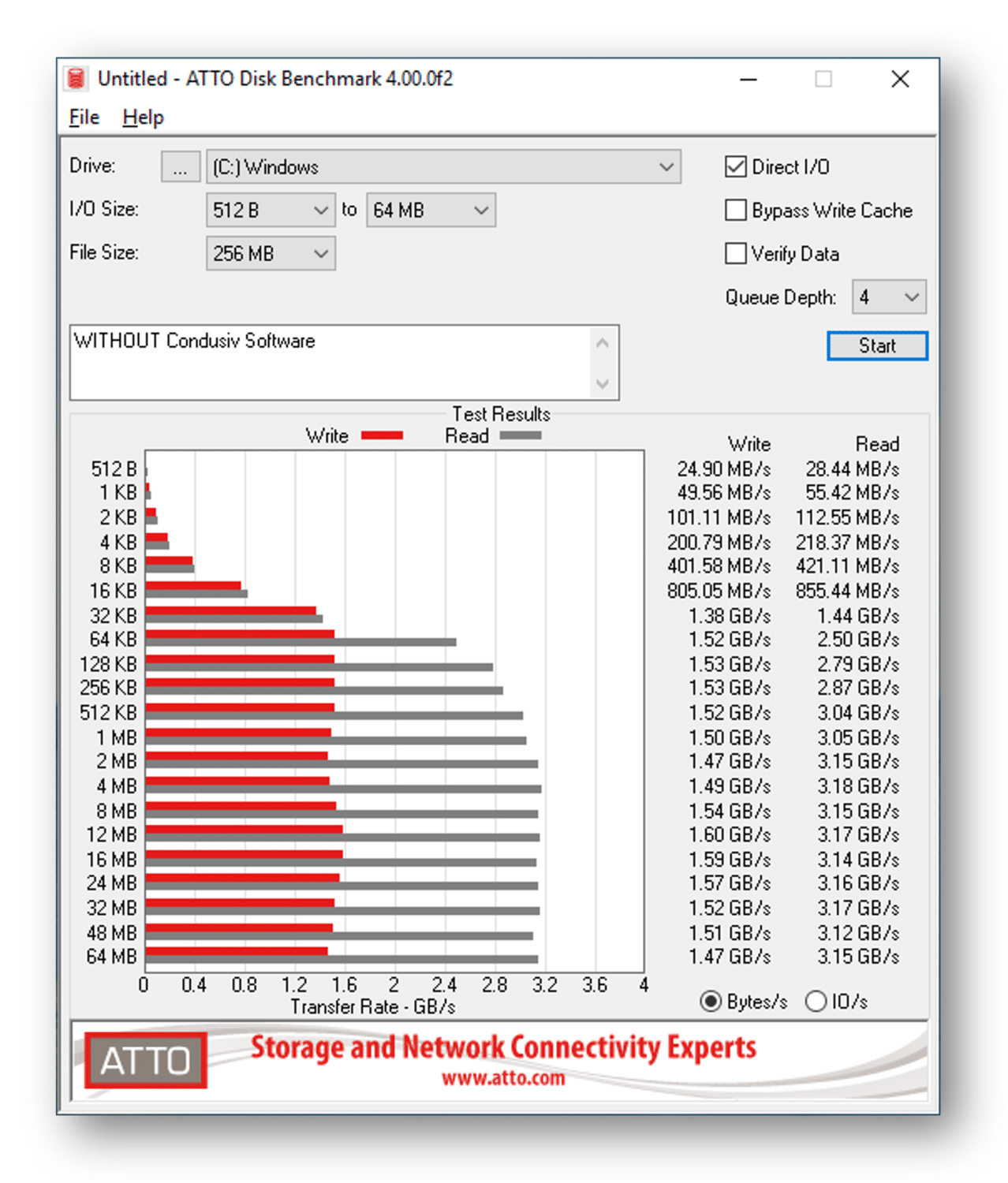

The crux of the matter comes down to storage I/O size and its effect on data transfer speeds. These results, taken from an NVMe-connected SSD (Samsung MZVKW1T0HMLH, model SM961), show that the read and write transfer speeds, or put another way, how much data can be transferred each second, are MUCH lower when the storage I/O sizes are below 64 KB.

You can see that whilst the transfer rate maxes out at around 1.5 GB per second for writes and around 3.2 GB per second for reads, smaller storage I/O sizes get nowhere near that maximum rate. That’s okay if you’re only saving 4 KB or 8 KB of data, but it is definitely NOT okay if you are trying to write a larger amount of data, say 128 KB or a couple of megabytes, and the Windows OS is breaking that down into smaller I/Os in the background and transferring them to and from disk at those much slower rates. This happens way too often, and it means that Windows is dampening efficiency and transferring your data much more slowly than it could, or should. That can have a very negative impact on the performance of your most important applications, and yes, they are probably the ones that users access the most and are most likely to complain about.
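To put rough numbers on that splitting, here is a minimal Python sketch that counts how many separate I/Os a single request turns into at a given I/O size. The function name and the sizes chosen are illustrative assumptions, not a description of how Windows actually splits any particular request.

```python
KIB = 1024


def io_count(request_bytes: int, io_size_bytes: int) -> int:
    """Return how many I/Os are needed to move request_bytes
    when each I/O carries at most io_size_bytes.

    Hypothetical helper for illustration only.
    """
    if request_bytes < 0 or io_size_bytes <= 0:
        raise ValueError("request must be >= 0 and I/O size must be > 0")
    # Integer ceiling division: a partial final chunk still costs one I/O.
    return (request_bytes + io_size_bytes - 1) // io_size_bytes


if __name__ == "__main__":
    request = 1024 * KIB  # a hypothetical 1 MB write
    for io_size in (4 * KIB, 8 * KIB, 64 * KIB, 1024 * KIB):
        print(f"{io_size // KIB:>5} KB I/Os -> {io_count(request, io_size)} operations")
```

In this model, a 1 MB write sent as 4 KB I/Os becomes 256 separate operations, compared with 16 operations at 64 KB, and each of those small operations runs at the slower end of the transfer-rate curve.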

The good news, of course, is that the V-locity® software from Condusiv® Technologies is designed to prevent these split I/O situations in Windows virtual machines, and Diskeeper® will do the same for physical Windows systems. Installing Condusiv’s software is a quick, easy and effective fix, as there is no disruption, no code changes are required, and there are no reboots. Just install our software and you are done!

You can even run this test for yourself on your own machine. Download a free copy of ATTO Disk Benchmark from the web and install it. You can then click its Start button to get a benchmark of YOUR system’s data transfer speeds at different I/O sizes. I bet you quickly see that when it comes to data transfer speeds, size really does matter!
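If you would rather script a quick sanity check first, the following Python sketch times sequential writes to a temporary file at a few I/O sizes. It is only a rough stand-in under stated assumptions: it is not how ATTO Disk Benchmark works, the function name is hypothetical, and the numbers will be influenced by OS write caching, the file system and the Python interpreter itself, so treat them as relative rather than absolute.

```python
import os
import tempfile
import time

KIB = 1024
MIB = 1024 * KIB


def write_throughput_mb_s(block_size: int, total_bytes: int, directory=None) -> float:
    """Write roughly total_bytes to a temp file in block_size chunks
    and return the observed rate in MB/s (hypothetical helper)."""
    block = os.urandom(block_size)
    fd, path = tempfile.mkstemp(dir=directory)
    written = 0
    try:
        start = time.perf_counter()
        # buffering=0 so each write() call is passed to the OS as its own I/O.
        with os.fdopen(fd, "wb", buffering=0) as f:
            while written < total_bytes:
                written += f.write(block)
            os.fsync(f.fileno())  # ask the OS to flush to the device before stopping the clock
        elapsed = max(time.perf_counter() - start, 1e-9)
    finally:
        os.remove(path)
    return written / elapsed / 1_000_000


if __name__ == "__main__":
    for size in (4 * KIB, 16 * KIB, 64 * KIB, 1 * MIB):
        rate = write_throughput_mb_s(size, 16 * MIB)
        print(f"{size // KIB:>5} KB writes: {rate:,.0f} MB/s")
```

Pass a `directory` on the volume you want to test; by default the file lands in the system temp directory, which may be on a different disk.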

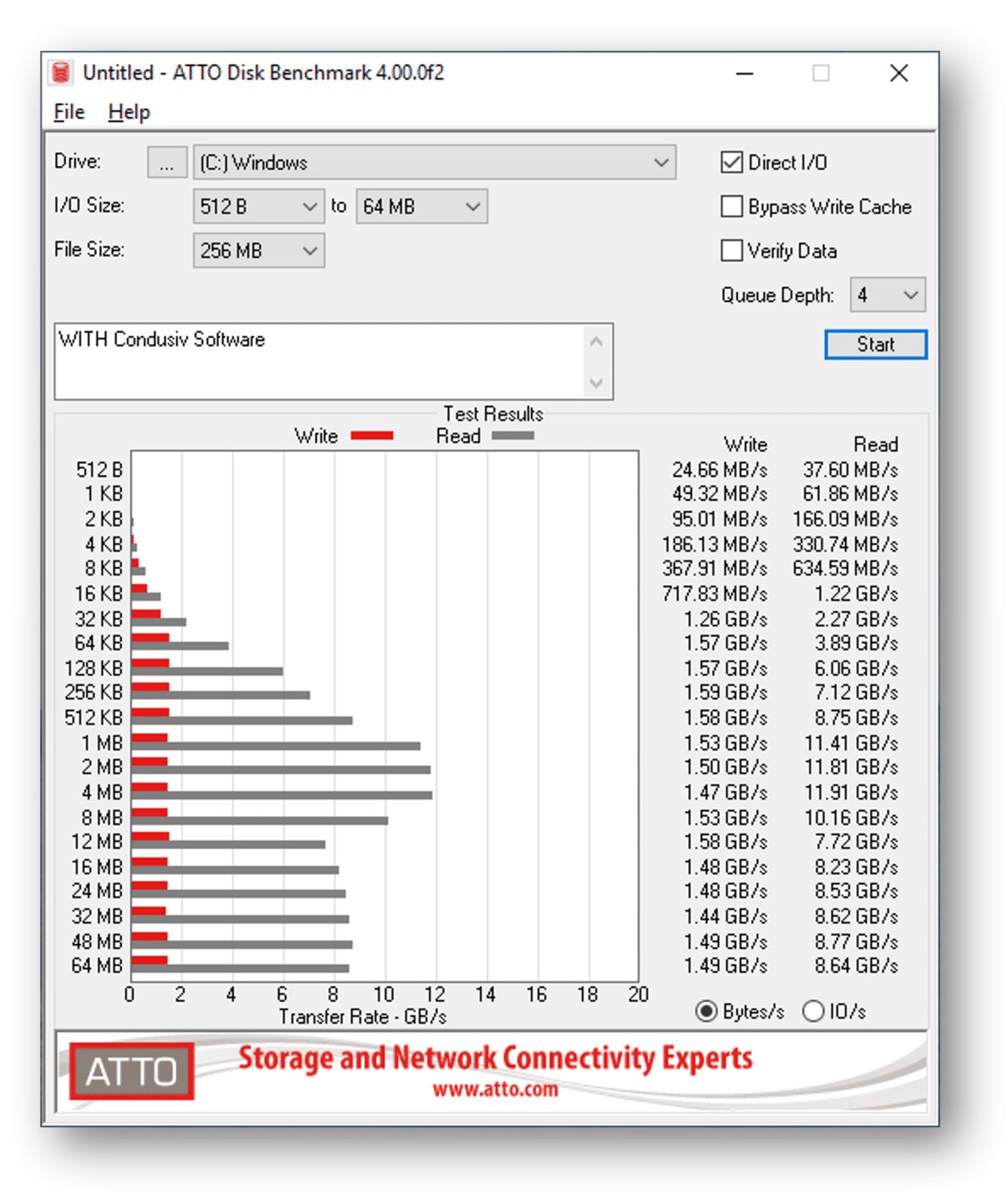

Out of interest, I enabled our Diskeeper software (I could have used V-locity instead) so that our RAM caching would assist the speed of the read I/O traffic, and the results were pretty amazing. Instead of the reads maxing out at around 3.2 GB per second, they were now maxing out at around a whopping 11 GB per second, more than three times faster. In fact, the ATTO Disk Benchmark software had to change the graph scale for the transfer rate (X-axis) from 4 GB/s to 20 GB/s, just to accommodate the extra GBs per second when the RAM cache was in play. Pretty cool, eh?

Of course, it is unrealistic to expect our software’s RAM cache to satisfy ALL of the read I/O traffic in a real, live environment the way it did in this lab test, but even if you satisfied only 25% of the reads from RAM in this manner, it certainly wouldn’t hurt performance!
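As a back-of-the-envelope check on that 25% figure, this Python sketch blends the two read rates from the tests above (around 3.2 GB per second from the SSD and around 11 GB per second with the RAM cache in play). It assumes, as a simplification, that every byte is read at one rate or the other and that the reads do not overlap; the function name is hypothetical and the result is an estimate, not a measurement.

```python
def effective_read_gb_s(hit_ratio: float,
                        cache_gb_s: float = 11.0,
                        disk_gb_s: float = 3.2) -> float:
    """Estimate the blended read rate when hit_ratio of the bytes
    come from RAM cache and the rest come from disk.

    Defaults are the approximate peak rates quoted in this article.
    """
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    # Time to read 1 GB is the sum of the time spent in each tier.
    seconds_per_gb = hit_ratio / cache_gb_s + (1.0 - hit_ratio) / disk_gb_s
    return 1.0 / seconds_per_gb


if __name__ == "__main__":
    for ratio in (0.0, 0.25, 0.5, 1.0):
        print(f"{ratio:>4.0%} from RAM -> {effective_read_gb_s(ratio):.2f} GB/s")
```

Under these assumptions, serving 25% of reads from RAM lifts the blended rate from 3.2 GB per second to roughly 3.9 GB per second.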

If you want to see this for yourself on one of your computers, download the ATTO Disk Benchmark tool from the web, if you haven’t already, and run it as described above to get a baseline for your machine. Then download and install a free trial copy of Diskeeper for physical clients or servers, or V-locity for virtual machines, from condusiv.com/try and run the ATTO Disk Benchmark tool several times. It will probably take a few runs of the test, but you should easily see the point at which the telemetry in Condusiv’s software identifies the correct data to satisfy from the RAM cache, as the read transfer rates will increase dramatically. The reads are no longer confined to the speed of your disk storage; they are now happening at the speed of RAM. That is much faster, even if the disk storage IS an NVMe-connected SSD. And yes, if you’re wondering, this does work with SAN storage and all levels of RAID too!

NOTE: Before testing, make sure you have enough “unused” RAM to cache with. A minimum of 4 GB to 6 GB of Available Physical Memory is perfect.

Whether you have spinning hard drives or SSDs in your storage array, the boost in read data transfer rates can make a real difference. Whatever storage you have serving YOUR Windows computers, it just doesn’t make sense to allow the Windows operating system to continue transferring data at a slower speed than it should. Now, with easy-to-install, “Set It and Forget It®” software from Condusiv Technologies, you can be sure that you’re getting all of the speed and performance you paid for when you purchased your equipment, through larger, more sequential storage I/O and the benefit of intelligent RAM caching.

If you’re still not sure, run the tests for yourself and see.

Size DOES matter!
