In over 30 years in the IT business, I can count on one hand the number of times I’ve heard an IT manager say, “The budget is not a problem. Cost is no object.”

It is as true today as it was 30 years ago: increasing pressure on the IT infrastructure, rising data loads and demands for improved performance are pitted against tight budgets. Frankly, I’d say it’s gotten worse. It’s kind of a good news/bad news story.

The good news is there is far more appreciation of the importance of IT management and operations than ever before. CIOs now report to the CEO in many organizations; IT and automation have become an integral part of business; and of course, everyone is a heavy tech user on the job and in private life as well.

The bad news is the demand for end-user performance has skyrocketed; the amount of data processed has exploded; and the growing number of uses (read: applications) of data is like a rising tide threatening to swamp even the most well-staffed and richly financed IT organizations.

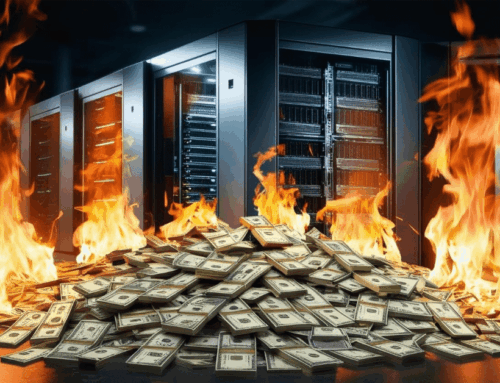

Keeping IT operations up and continuously serving the end-user community while keeping costs manageable is quite a balancing act these days. Weighing capital expenditures on new hardware and infrastructure against operational expenditures on personnel, subscriptions, cloud-based services or managed service providers can become a real dilemma for IT management.

An IT executive must be attuned to changes in technology, changes in the business itself and the changing nature of the existing infrastructure, all while trying to extend the life of equipment as far as possible.

Performance demands keep IT professionals awake at night. The dreaded 2:00 a.m. call about a crashed server or network operation, or a halt in operations during a critical business period (think end-of-year closing, peak sales season, or inventory cycle), reveals a hard truth: many IT organizations are holding on by the skin of their teeth.

Condusiv has been in the business of improving the performance of Windows systems for 30 years. We’ve seen it all. One of the biggest mistakes an IT decision-maker can make is to go along with the “common wisdom” (primarily pushed by hardware manufacturers) that the only way to improve system and application performance is to buy new hardware. Certainly, at some point hardware upgrades are necessary, but the fact is that some 30-40% of performance is being robbed by the small, fractured, random I/O that the Windows operating system generates. That applies to any Windows operating system, including Windows 10 and Windows Server 2019 (also see the earlier article, Windows is Still Windows).

Don’t get me wrong: Windows is an amazing solution used by some 80% of all systems on the planet. But as the storage layer has been logically separated from the compute layer and more systems are being virtualized, Windows handles I/O logically rather than physically. That means it breaks reads and writes down to their lowest common denominator, creating tiny, fractured, random I/O and a “noisy” environment. Add a growing number of virtualized systems to the mix and the overhead really builds up (you may have even heard of the “I/O blender effect”).

The bottom line: much of performance degradation is a software problem that can be solved by software. So, rather than buying a “forklift upgrade” of new hardware, our customers are offloading 30-50% or more of their I/O, which dramatically improves performance. By simply adding our patented software, they avoid the disruption of migrating to new systems, rip-and-replace projects, end-user training and the rest of that challenge.
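To make the “I/O blender effect” concrete, here is a minimal Python sketch. It is a toy model, not a measurement of Windows or of any hypervisor: the helper names (`vm_stream`, `blend`, `sequential_fraction`), the four-VM setup, and the strict round-robin interleaving are all assumptions chosen for illustration. Each simulated VM issues perfectly sequential block requests, yet once the streams are merged, the storage layer sees almost nothing that looks sequential.

```python
from itertools import zip_longest


def sequential_fraction(addresses):
    """Share of requests whose block address directly follows the previous one."""
    if len(addresses) < 2:
        return 0.0
    hits = sum(1 for prev, cur in zip(addresses, addresses[1:]) if cur == prev + 1)
    return hits / (len(addresses) - 1)


def vm_stream(base, length):
    """Hypothetical VM workload: a purely sequential run of block addresses."""
    return list(range(base, base + length))


def blend(streams):
    """Round-robin interleave of the per-VM streams (a simplifying assumption)."""
    merged = []
    for group in zip_longest(*streams):
        merged.extend(addr for addr in group if addr is not None)
    return merged


# Four VMs, each reading 1,000 consecutive blocks from its own region of storage.
streams = [vm_stream(vm * 1_000_000, 1_000) for vm in range(4)]

print("one VM alone :", sequential_fraction(streams[0]))
print("four VMs mixed:", sequential_fraction(blend(streams)))
```

In this toy setup, a single VM's stream is fully sequential, while the blended stream contains no back-to-back sequential requests at all. Real workloads and real schedulers are messier, so treat the numbers as an illustration of the mechanism only.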

Yes, the above could be considered a pitch for our software, but the fact is, we’ve sold over 100 million copies of our products to help IT professionals get some sleep at night. We’re the world leader in I/O reduction. We improve system performance by an average of 30-50% or more (often far more). Our products are non-disruptive to the point that we even trademarked the term “Set It and Forget It®”. We’re proud of that, and of the help we’re providing to the IT community.

To try it for yourself, download a free, 30-day trial version (no reboot required) at condusiv.com/try

I've been a grateful and appreciative user of Diskeeper for over two decades. Just as the Microsoft Corp needs to work at breathtaking speed to develop, update, and secure its Windows operating system, it has been reassuring to learn that Condusiv Technologies is able to provide new versions of this marvelous utility that can match the rapid development in both hardware and software.