This blog post complements a video in which I demonstrated how to use the intelligent RAM caching technology found in Condusiv’s software to improve the performance that a computer can get from NVMe flash storage. You can view this video here:

A question arose from a couple of long-term customers about whether the use of the Condusiv software was still relevant if they started utilizing very fast, flash storage solutions. This was a fair question!

The Condusiv software is designed to reduce the amount of unnecessary storage I/O traffic that has to go out and be processed by the underlying disk storage layer. It not only reduces the amount of I/O traffic, but it optimizes that which DOES have to go out to disk and it further reduces the workload on the storage layer by employing a very intelligent RAM caching strategy.

So, given that flash storage is not only becoming more prevalent in today’s compute environments, but can also process storage I/O traffic VERY fast compared to its spinning disk counterparts and handle more I/Os per second (IOPS) than ever before, the very sensible question was this:

“Can the use of Condusiv’s software provide a significant performance increase when using very fast flash storage?”

As I was fortunate to have recently implemented some flash storage in my workstation, I was keen to run an experiment to find out.

SPOILER ALERT: For those of you who just want to have the question answered, the answer is a resounding YES!

The test showed beyond doubt that with Condusiv’s software installed, your Windows computer can process significantly more I/Os per second, sustain a much higher data throughput, and give storage I/O-heavy workloads the opportunity to get significantly more work done in the same amount of time, even when using very fast flash storage.

For those of you true ‘techies’ who are as geeky as me, read on, and I will describe the testing methodology and results in more detail.

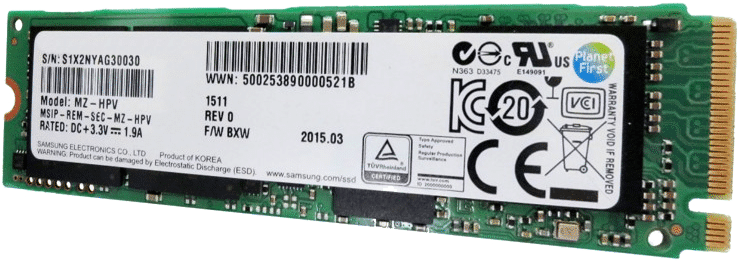

The storage that I now had in my workstation (and am still happily using!) was a 1 terabyte SM961 POLARIS M.2-2280 PCI-E 3.0 X 4 NVMe solid state drive (SSD).

Is it as fast as it’s made out to be? Well, in this engineer’s opinion – OMG YES!

It makes one hell of a difference when compared to spinning disk drives. This is in part because it’s connected to the computer via a PCI Express (PCIe) bus, as opposed to a SATA bus. The bus is what you connect your disk to in the computer, and different types of buses have different capabilities, such as the speed at which data can be transferred. SATA-connected disks are significantly slower than today’s PCIe-connected storage using an NVMe device interface. There is a great Wiki about this if you want to read more: https://en.wikipedia.org/wiki/NVM_Express

To give you an idea of the improvement though, consider that the Advanced Host Controller Interface (AHCI) that is used with SATA-connected disks has one command queue, which can hold 32 commands. That’s up to 32 storage requests at a time, and that was okay for spinning disk technology because the disks themselves could only cope with a certain number of storage requests at a time.

NVMe, on the other hand, doesn’t have one command queue; it has up to 65,535 queues. AND, each of those command queues can itself accommodate up to 65,536 commands. That’s a lot more storage requests that can be processed at the same time! This is really important because flash storage is capable of processing MANY more storage requests in parallel than its spinning disk cousins. Quite simply, NVMe was needed to really make the most of what flash disk hardware can do. You wouldn’t put a kitchen tap (faucet) on the end of a fire hose and expect the same amount of water to flow through it, right? Same principle!
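To put those queue figures side by side, here is a minimal Python sketch that simply multiplies them out. The numbers are the interface maximums quoted above, not what any particular drive or driver actually exposes, and the function and constant names are made up for this illustration.

```python
# Queue limits as quoted in the text (interface maximums, not what a given
# drive or driver will necessarily expose).
AHCI_QUEUES = 1
AHCI_COMMANDS_PER_QUEUE = 32
NVME_QUEUES = 65_535
NVME_COMMANDS_PER_QUEUE = 65_536


def max_outstanding(queues: int, commands_per_queue: int) -> int:
    """Upper bound on the number of commands that can be queued at once."""
    return queues * commands_per_queue


ahci_max = max_outstanding(AHCI_QUEUES, AHCI_COMMANDS_PER_QUEUE)
nvme_max = max_outstanding(NVME_QUEUES, NVME_COMMANDS_PER_QUEUE)

print(f"AHCI: {ahci_max:,} outstanding commands")
print(f"NVMe: {nvme_max:,} outstanding commands")
print(f"Ratio: {nvme_max // ahci_max:,}x")
```

These are theoretical ceilings only; real workloads rarely get anywhere near them, but the gap shows why the older interface would throttle flash hardware.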

As you can probably tell, I’m quite excited by this boost in storage performance. (I’m strange like that!) And, I know I’m getting a little off-topic (apologies), so back to the point!

I had this SUPER-FAST storage solution and needed to prove one way or another if Condusiv’s software could increase the ability of my computer to process even more workload.

Would my computer be able to process more storage I/Os per Second?

Would my computer be able to process a larger amount of storage I/O traffic (megabytes) every second?

Testing Methodology

To answer these questions, I took a virtual machine and cloned it so that I had two virtual machines that were as identical as I could make them. I then installed Condusiv’s software on both and disabled Condusiv on one of the machines, so that it would process storage I/O traffic, just as if Condusiv wasn’t installed.

To generate a storage I/O traffic workload, I turned to my old friend IOMETER. For those of you who might not know IOMETER, it is a software utility that was originally designed by Intel but is now open source and available at SourceForge.net. It is designed as an I/O subsystem measurement tool and is great for generating I/O workloads of different types (very customizable!) and measuring how quickly that I/O workload can be processed. Great for testing networks or, in this case, how fast you can process storage I/O traffic.

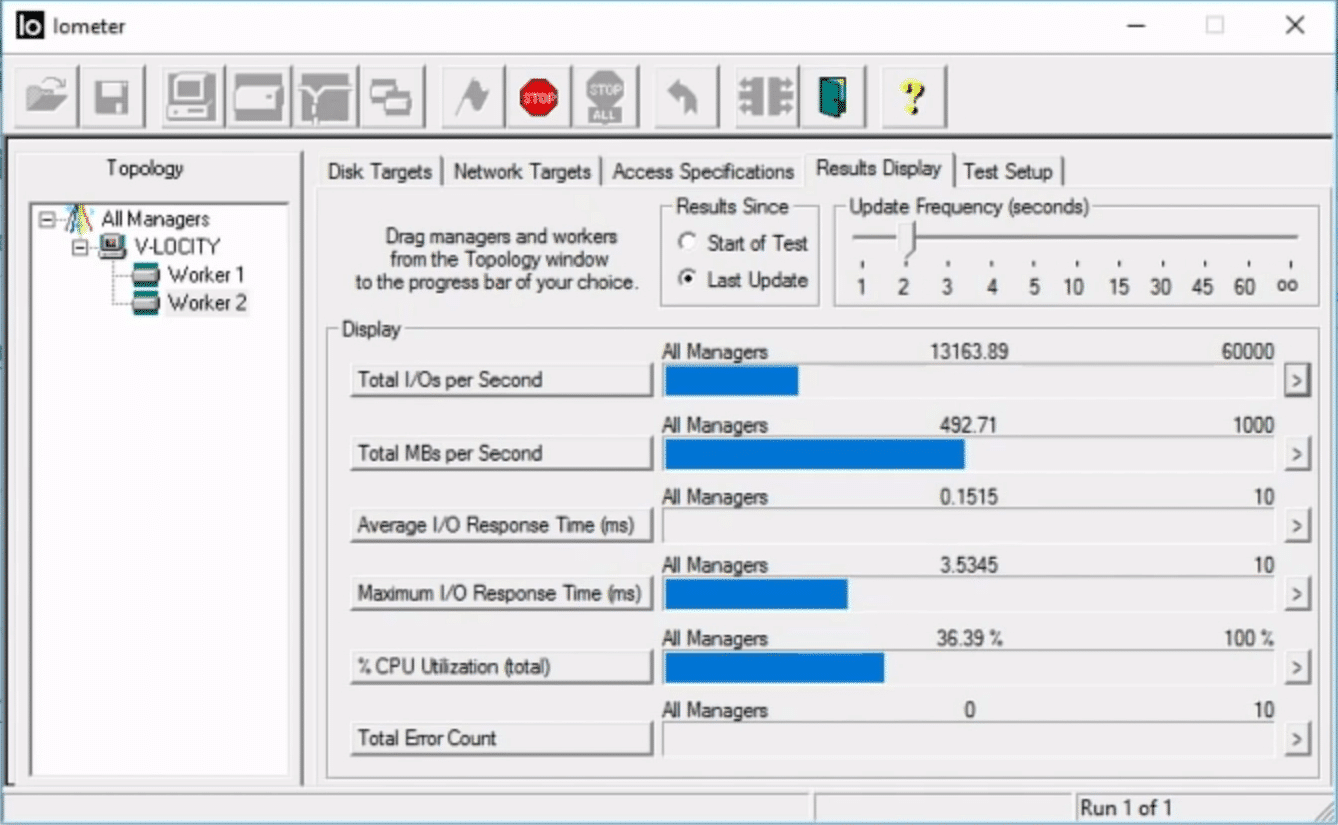

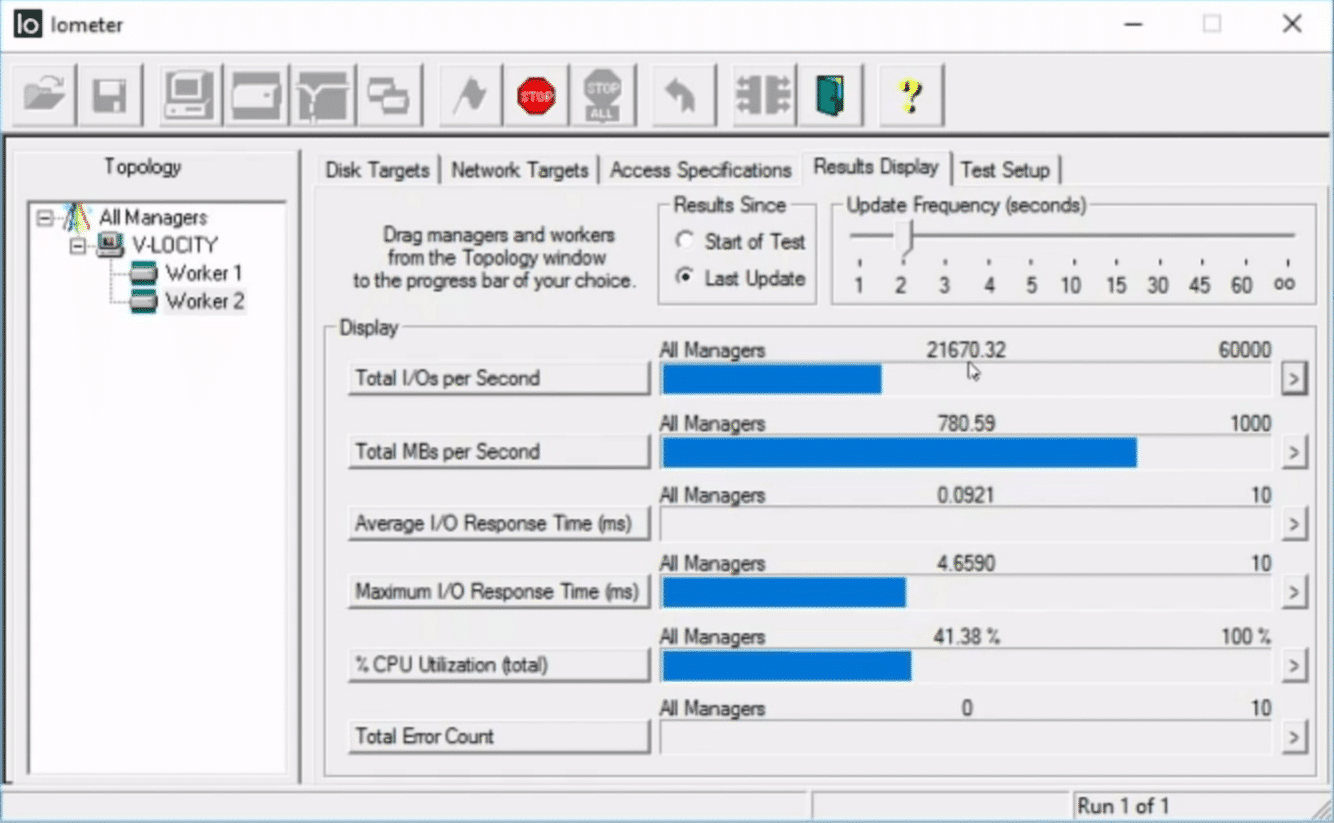

I configured IOMETER on both machines with the type of workload that one might find on a typical SQL database server. I KNOW, I know, there is no such thing as a ‘typical’ SQL database, but I wanted a storage I/O profile that was as meaningful as possible, rather than a workload that would just make Condusiv look good. Here is the actual IOMETER configuration:

Worker 1 – 16 kilobyte I/O requests, 100% random, 33% Write / 67% Read

Worker 2 – 64 kilobyte I/O requests, 100% random, 33% Write / 67% Read
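For readers who want to see what that access pattern looks like in practice, the following is a rough Python sketch that generates request streams with the same block sizes and read/write mix. It is not IOMETER and does not perform any real I/O; `WorkerSpec`, `generate_requests`, and the 1 GiB target size are hypothetical names and values chosen purely for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkerSpec:
    name: str
    block_size: int     # bytes per I/O request
    write_percent: int  # percentage of requests that are writes


# The two workers from the configuration above.
WORKERS = [
    WorkerSpec("Worker 1", 16 * 1024, 33),
    WorkerSpec("Worker 2", 64 * 1024, 33),
]


def generate_requests(spec, target_size, count, seed=0):
    """Yield (operation, offset, length) tuples for one worker.

    100% random access is modelled by picking every block-aligned
    offset uniformly at random within the target.
    """
    rng = random.Random(seed)
    blocks = target_size // spec.block_size
    for _ in range(count):
        op = "write" if rng.randrange(100) < spec.write_percent else "read"
        offset = rng.randrange(blocks) * spec.block_size
        yield op, offset, spec.block_size


if __name__ == "__main__":
    target = 1024 ** 3  # hypothetical 1 GiB test file
    for spec in WORKERS:
        reqs = list(generate_requests(spec, target, 10_000))
        writes = sum(1 for op, _, _ in reqs if op == "write")
        print(f"{spec.name}: {spec.block_size // 1024} KiB requests, "
              f"{writes / len(reqs):.0%} writes")
```

Feeding a stream like this to a real benchmark tool is what makes the workload hard to satisfy with simple sequential read-ahead, which is why a fully random profile is a reasonably demanding test.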

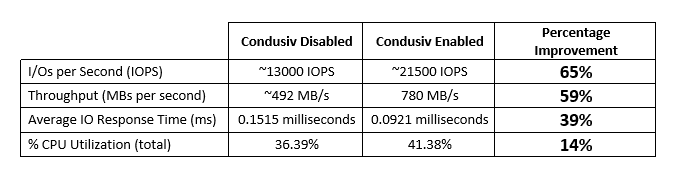

Test Results

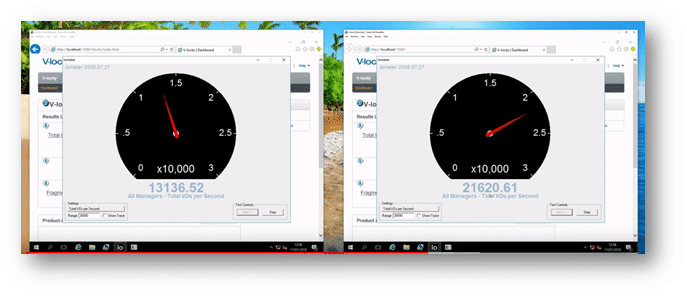

Condusiv’s Software Disabled vs. Condusiv’s Software Enabled

Summary

Conclusion

In this lab test, the presence of Condusiv’s software reduced the average amount of time required to process storage I/O requests by around 65%, allowing a greater number of storage I/O requests to be processed per second and a greater amount of data to be transferred.
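As a back-of-the-envelope illustration of why lower latency translates into more IOPS, the sketch below applies Little's Law (requests in flight = throughput × time per request). It assumes the number of outstanding I/Os stays constant, and the baseline latency and queue depth are hypothetical values, not figures measured in this test; only the 65% reduction comes from the result above.

```python
def iops_from_latency(outstanding_ios: int, avg_latency_ms: float) -> float:
    """Little's Law: throughput = requests in flight / time per request."""
    return outstanding_ios / (avg_latency_ms / 1000.0)


OUTSTANDING_IOS = 16        # hypothetical, held constant for both runs
BASELINE_LATENCY_MS = 1.0   # hypothetical average latency without caching
LATENCY_REDUCTION = 0.65    # "around 65%" from the lab test

improved_latency_ms = BASELINE_LATENCY_MS * (1.0 - LATENCY_REDUCTION)

baseline_iops = iops_from_latency(OUTSTANDING_IOS, BASELINE_LATENCY_MS)
improved_iops = iops_from_latency(OUTSTANDING_IOS, improved_latency_ms)
speedup = improved_iops / baseline_iops

print(f"Baseline: {baseline_iops:,.0f} IOPS")
print(f"Improved: {improved_iops:,.0f} IOPS")
print(f"Speedup:  {speedup:.2f}x")
```

Under those assumptions, the speedup depends only on the latency reduction, so the hypothetical baseline values cancel out of the ratio.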

To prove beyond doubt that it was indeed Condusiv that caused the additional storage I/O traffic to be processed, I stopped the Condusiv service. This immediately ‘turned off’ all of the RAM caching and other optimization engines that Condusiv was providing, and the net result was that the IOPS and throughput dropped to normal as the underlying storage had to start processing ALL of the storage traffic that IOMETER was generating.

The true value of reducing storage I/O traffic

The more you can reduce storage I/O traffic that has to go out and be processed by your disk storage, the more storage I/O headroom you are handing back to your environment for use by additional workloads. It means that your current disk storage can now cope with:

- More computers sharing the storage. Great if you have a Storage Area Network (SAN) underpinning your virtualized environment, for example. More VMs running!

- More users accessing and manipulating the shared storage. The more users you have, the more storage I/O traffic is likely to be generated.

- Greater CPU utilization. CPU speeds and processing capacity keep increasing. Now that the available processing power typically far exceeds what workloads need, Condusiv can help your applications become more productive and use more of that processing power by not having to wait so much on the disk storage layer.

If you can achieve this without having to replace or upgrade your storage hardware, it not only increases the return on your current storage hardware investment but also might allow you to keep that storage running for a longer period of time (if you’re not on a fixed refresh cycle).

Sweat the storage asset!

(I hate that term, but you get the idea)

When you do finally need to replace your current storage, perhaps it won’t be as costly as you thought because you’re not having to OVER-PROVISION the storage as much, to cope with all of the excess, unnecessary storage traffic that Condusiv’s software can eliminate.

I typically see a storage traffic reduction of at least 25% at customer sites.
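To make the headroom idea concrete, here is a small Python sketch of the arithmetic. The capacity and demand figures are invented for illustration; only the 25% reduction comes from the text, and the function names are hypothetical.

```python
def demand_after_reduction(demand_iops: float, reduction: float) -> float:
    """Storage I/O that still reaches the storage layer after the reduction."""
    return demand_iops * (1.0 - reduction)


def headroom(capacity_iops: float, demand_iops: float) -> float:
    """Spare I/O capacity left for additional workloads."""
    return capacity_iops - demand_iops


CAPACITY_IOPS = 20_000   # hypothetical: what the storage can sustain
DEMAND_IOPS = 18_000     # hypothetical: what the workloads generate today
TRAFFIC_REDUCTION = 0.25 # "at least 25%" from the text

before = headroom(CAPACITY_IOPS, DEMAND_IOPS)
after = headroom(
    CAPACITY_IOPS, demand_after_reduction(DEMAND_IOPS, TRAFFIC_REDUCTION)
)

print(f"Headroom before: {before:,.0f} IOPS")
print(f"Headroom after:  {after:,.0f} IOPS")
```

With these made-up numbers, the same storage goes from running close to its limit to having room for extra VMs or users.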

AND, I haven’t even mentioned the performance boost that many workloads receive from the RAM caching technology provided by Condusiv’s software. It is worth remembering that as fast as today’s flash storage solutions are, the RAM that you have in your computers is faster! The greater the percentage of read I/O traffic that you can satisfy from RAM instead of the storage layer, the better performing those storage I/O-hungry applications are likely to be.
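The effect of satisfying reads from RAM can be sketched as a weighted average of the two latencies. The latency values below are hypothetical round numbers, not measurements from this test, and the function name is made up for the example.

```python
def effective_read_latency_us(
    hit_ratio: float, ram_latency_us: float, storage_latency_us: float
) -> float:
    """Average read latency when a fraction of reads is served from RAM."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return hit_ratio * ram_latency_us + (1.0 - hit_ratio) * storage_latency_us


RAM_LATENCY_US = 1.0     # hypothetical cost of a read served from a RAM cache
FLASH_LATENCY_US = 100.0 # hypothetical cost of a read served from flash

for hit_ratio in (0.0, 0.25, 0.5, 0.75, 0.9):
    latency = effective_read_latency_us(
        hit_ratio, RAM_LATENCY_US, FLASH_LATENCY_US
    )
    print(f"{hit_ratio:.0%} of reads from RAM -> {latency:.1f} us average")
```

The higher the share of reads served from RAM, the closer the average gets to RAM speed, regardless of how fast the underlying flash is.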

Applications that benefit the most

In the real world, Condusiv is not a silver bullet for all types of workloads, and I wouldn’t insult your intelligence by saying that it was. If you have some workloads that don’t generate a great deal of storage I/O traffic, perhaps a DNS server, or DHCP server, well, Condusiv isn’t likely to make a huge difference. That’s my honest opinion as an IT Engineer.

HOWEVER, if you are using storage I/O-hungry applications, then you really should give it a try.

Do you use any of these in your IT environment?

Here are just some examples of the workloads that thousands of Condusiv customers are ‘performance-boosting’ with Condusiv’s I/O reduction and RAM caching technologies:

- Database solutions such as Microsoft SQL Server, Oracle, MySQL, SQL Express, and others.

- Virtualization solutions such as Microsoft Hyper-V and VMware.

- Enterprise Resource Planning (ERP) solutions like Epicor.

- Business Intelligence (BI) solutions like IBM Cognos.

- Finance and payroll solutions like SAGE Accounting.

- Electronic Health Records (EHR) solutions, such as MEDITECH.

- Customer Relationship Management (CRM) solutions, such as Microsoft Dynamics.

- Learning Management Systems (LMS) solutions.

- Not to mention email servers like Microsoft Exchange AND busy file servers.

There are case studies on our website for all of these workload types (and more).

Try it for yourself

You can experience the full power of Condusiv’s software for yourself, in YOUR Windows environment within a couple of minutes. Just download the fully-featured 30-day trialware here. You can check the dashboard reporting after a week or two and see just how much storage I/O traffic has been eliminated, and more importantly, how much storage time has been saved by doing so.

It really is that simple!

You don’t even need to reboot to make the software work. There is no disruption to live running workloads; you can just install and uninstall at will, and it only takes a minute or so.

You will typically start seeing results just minutes after installing.

I hope that this has been interesting and helpful. If you have any questions about the technologies within Condusiv’s software or have any questions about testing, feel free to contact us.


I will be delighted to hear from you!

Originally published on: Sep 4, 2018. Updated Jul 14, 2020.

Thanks for your comment Rick Feuling, and you’re right! We’ve been able to help lots of customers with V-locity in Microsoft Hyper-V environments. I’m delighted that you see it as a small miracle too – that’s fantastic!

With regard to Diskeeper on your physical server, where you saw no performance improvements, it is probable that the workload being processed by your SQL server on that physical machine was not large enough (in terms of storage IO traffic) to really stress the SSD storage that underpinned it. If the average disk latency was quite low already, say below 5-10 milliseconds, it was likely fast enough already. Diskeeper’s RAM caching would have reduced that further, but because it was already fast enough, you probably didn’t perceive the difference.

Of course, if the workload increases, causing the disk IO queue lengths and latency to increase, it would certainly be worth trying Diskeeper again before going down the route of replacing the SSD disks with something faster and more expensive. Using software to get your peak performance back is usually a far cheaper option.

We don't have NVMe but we have SSD infrastructure in our Microsoft Hyper-V virtual environment and I can attest that this was also the case for us.

We also tried Diskeeper on a physical server (no virtualization) running SSD (SQL) and we saw NO performance improvements.

If you're running SQL in a virtualized environment V-locity is a small miracle.