In just 3 minutes, George Crump, Senior Analyst at Storage Switzerland, explains the real problem around fragmentation and SAN storage, debunks misconceptions, and describes what organizations are doing about it. Note that although he is speaking about the Windows OS on physical servers, the problem is the same for virtual servers connected to SAN storage. Watch ->

In our conversations with SAN storage administrators, and even storage vendors, it usually takes some time for people to realize that performance-robbing Windows fragmentation does occur, but that the problem is not what they think. It has nothing to do with the physical layer under SAN management or with latency from physical disk head movement.

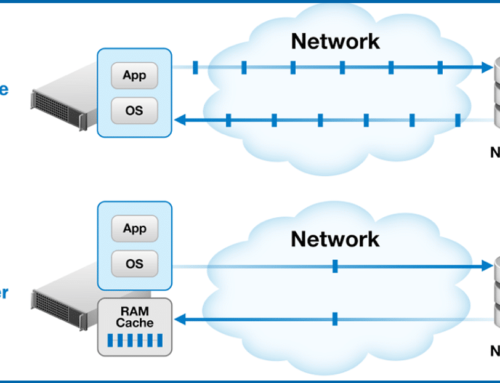

When people think of fragmentation, they typically think in the context of physical blocks on a mechanical disk. However, in a SAN environment, the Windows OS is abstracted from the physical layer. The Windows OS manages the logical disk software layer, and the SAN manages how the data is physically written to disk or solid-state media.

What this means is that the SAN device has no control or influence over how data is written to the logical disk. In the video, George Crump describes how fragmentation is inherent to the fabric of Windows and what actually happens when a file is written to the logical disk in a fragmented manner: I/Os become fractured, and it takes more I/O than necessary to process any given file. As a result, SAN systems are overloaded with small, fractured, random I/O, which dampens overall performance. The I/O overhead from a fragmented logical disk impacts SAN storage populated with flash just as much as a system populated with disk.

The video doesn’t have time to go into why this actually happens, so here is a brief explanation:

Because the Windows OS takes a one-size-fits-all approach to all environments, it is not aware of file sizes. That means the OS does not look for a properly sized allocation within the logical disk when writing or extending a file. It simply looks for the next available allocation. If that allocation is not large enough, the OS splits the file, looks for the next available allocation, fills it, and splits again until the whole file is written. The resulting problem in a SAN environment, with flash or disk, is that a dedicated I/O operation is required to process every piece of the file. In George’s example, it could take 25 I/O operations to process a file that could otherwise have been processed with a single I/O. We see customer examples of severe fragmentation where a single file has been fractured into thousands of pieces at the logical layer. It’s akin to pouring molasses on a SAN system.
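To make the mechanics concrete, here is a minimal Python sketch of the behavior described above. It is a toy model, not the actual Windows allocator or any vendor's algorithm: the free-space layout, the function names `allocate_next_available` and `allocate_size_aware`, and the assumption that each piece of a file costs exactly one I/O are all hypothetical and chosen only for illustration.

```python
from typing import List, Tuple

# A free extent on the logical disk: (start_cluster, length_in_clusters).
Extent = Tuple[int, int]


def allocate_next_available(free: List[Extent], size: int) -> List[Extent]:
    """Fill free extents in address order, splitting the file as needed."""
    pieces: List[Extent] = []
    remaining = size
    for start, length in sorted(free):
        if remaining == 0:
            break
        used = min(length, remaining)
        pieces.append((start, used))
        remaining -= used
    if remaining:
        raise ValueError("not enough free space for the file")
    return pieces


def allocate_size_aware(free: List[Extent], size: int) -> List[Extent]:
    """Prefer the smallest single extent that holds the whole file.

    Falls back to next-available allocation when no extent is large enough.
    """
    fitting = [extent for extent in free if extent[1] >= size]
    if fitting:
        start, _ = min(fitting, key=lambda extent: (extent[1], extent[0]))
        return [(start, size)]
    return allocate_next_available(free, size)


# Hypothetical free-space map: a few small gaps followed by larger ones.
free_space = [(0, 4), (10, 2), (20, 3), (40, 16), (100, 64)]
file_size = 12  # clusters

fragmented = allocate_next_available(free_space, file_size)
contiguous = allocate_size_aware(free_space, file_size)

# Under the one-I/O-per-piece assumption, the piece count is the I/O count.
print("next available:", len(fragmented), "pieces", fragmented)
print("size aware:    ", len(contiguous), "piece ", contiguous)
```

In this model the same 12-cluster file lands in four pieces when the allocator takes whatever comes next, and in one piece when it first looks for a gap large enough to hold the whole file. The same effect, multiplied across every file on a busy volume, is where the extra I/O comes from.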

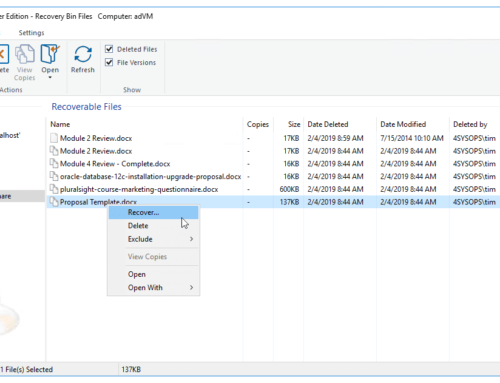

Because defragmentation only deals with the problem after the fact, and is not an option on a modern production SAN without taking it offline, Condusiv developed its patented IntelliWrite® technology, included in both Diskeeper® and V-locity®, to prevent I/Os from fracturing in the first place. IntelliWrite provides intelligence to the Windows OS to help it find a properly sized allocation within the logical disk instead of the next available allocation. This enables files to be written (and read) in a more contiguous and sequential manner, so only minimal I/O is required for any workload from server to storage. This increases throughput on existing systems, so organizations can get peak performance from the SSDs or mechanical disks they already have and avoid overspending on expensive hardware to combat performance problems that can be solved so easily.