By 2020, an estimated 43 trillion gigabytes of data will have been created—300 times the amount of data in existence fifteen years earlier. The benefits of big data, in virtually every field of endeavor, are enormous. We know more, and in many ways can do more, than ever before. But what of the challenges posed by this “data tsunami”? Will the sheer ability to manage—or even to physically house—all this information become a problem?

Condusiv CEO Jim D’Arezzo, in a recent discussion with Supply Chain Brain, commented that “As it has over the past 40 years, technology will become faster, cheaper, and more expansive; we’ll be able to store all the data we create. The challenge, however, is not just housing the data, but moving and processing it. The components are storage, computing, and network. All three need to be optimized; I don’t see any looming insurmountable problems, but there will be some bumps along the road.”

One example is healthcare. Speaking with Healthcare IT News, D’Arezzo noted that there are many new solutions open to healthcare providers today. “But with all the progress,” he said, “come IT issues. Improvements in medical imaging, for instance, create massive amounts of data; as the quantity of available data balloons, so does the need for processing capability.”

Giving healthcare providers, and professionals in other areas, the benefits of the data they collect is not always easy. In an interview with Transforming Data with Intelligence, D’Arezzo said, “Data center consolidation and updating is a challenge. We run into cases where organizations do consolidation on a ‘forklift’ basis, simply dumping new storage and hardware into the system as a solution. Shortly thereafter, they often discover that performance has degraded. A bottleneck has been created that needs to be handled with optimization.”

The news is all over it. You are experiencing it. Big data. Big problems. At Condusiv®, we get it. We’ve seen users of our I/O reduction software solutions increase the capability of their storage and servers, including SQL servers, by 30% to 50% or more. In some cases, we’ve seen results as high as 10X initial performance—without the need to purchase a single box of new hardware. The tsunami of data—we’ve got you covered.

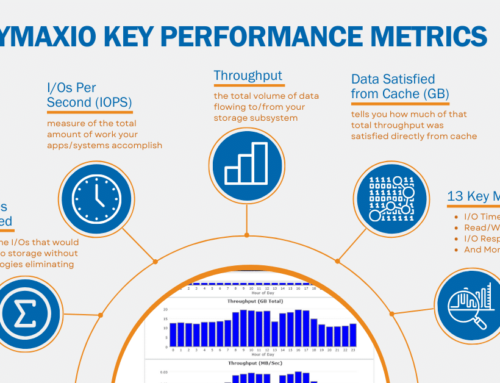

If you’re interested in reducing the two biggest silent killers of your SQL performance, download a free 30-day trial of DymaxIO fast data software and boost your I/O performance now.

If you want to hear why your heaviest workloads are processing only half the throughput they should from VM to storage, view this short video.
