In case you haven’t heard the pre-announcement buzz that made a splash in the press, V-locity® 6.0 I/O reduction software is being released in a couple of weeks. To understand why it’s significant, and why it’s an unprecedented 3X faster than its predecessor, you first have to understand the single biggest factor that dampens application performance in virtual environments: I/O that is increasingly small, fractured, and random. That kind of I/O profile is akin to pouring molasses on compute and storage systems, because it makes them work much harder than necessary to process any given workload. Virtualized organizations stymied by sluggish performance in their most I/O-intensive applications suffer in large part from a problem we call “death by a thousand cuts”: I/O that is smaller, more fractured, and more random than it needs to be.

Organizations tend to overlook the root problem and instead try to mask it reactively with more spindles, more flash, or a forklift storage upgrade. Unfortunately, this approach wastes much of any new investment in flash, since I/O inefficiencies at the Windows OS layer and at the hypervisor layer still rob the system of optimal performance.

V-locity® version 6 has been built from the ground up to help organizations solve their toughest application performance challenges without new hardware. It does this in two ways: it optimizes the I/O profile for greater throughput, and it targets the smallest, random I/O to be served from a cache in available DRAM, which reduces latency and rids the infrastructure of the kind of I/O that penalizes performance the most.

Although much is made of V-locity’s patented IntelliWrite® engine, which increases I/O density and sequentializes writes, special attention in this release went into V-locity’s DRAM read caching engine, IntelliMemory®. It is now 3X more efficient in version 6 thanks to changes in the behavioral analytics engine, which focuses on “caching effectiveness” instead of “cache hits.”

Leveraging available server-side DRAM for caching is very different from leveraging a dedicated flash resource for cache, whether that be PCI-e or SSD. Although DRAM offers far less capacity, it is dramatically faster than a PCI-e or SSD cache sitting below it, which makes it the ideal first caching tier in the infrastructure. The trick is in knowing how to best use a capacity-limited but blazing-fast storage medium.

Commodity algorithms that simply look at characteristics like access frequency might work for capacity-intensive caches, but they don’t work for DRAM. V-locity 6.0 determines the best use of DRAM for caching by collecting a wide range of data points (storage access, frequency, I/O priority, process priority, type of I/O, nature of I/O (sequential or random), and time between I/Os), then leverages its analytics engine to identify which storage blocks will benefit the most from caching. This also reduces “cache churn,” the repeated recycling of cache blocks. By prioritizing the smallest, random I/O to be served from DRAM, V-locity keeps the most performance-robbing I/O from traversing the infrastructure. Administrators don’t need to be concerned about carving out precious DRAM for caching purposes, as V-locity dynamically leverages available DRAM. With a mere 4GB of RAM per VM, we’ve seen gains from 50% to well over 600%, depending on the I/O profile.
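To make the contrast concrete, here is a minimal Python sketch of the difference between ranking blocks purely by access frequency and ranking them by a composite score that favors small, random I/O. It is an illustration only and not V-locity’s actual analytics engine: the `BlockStats` fields, the weighting formula, and the sample numbers are all hypothetical, chosen just to show the idea with a tiny cache.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class BlockStats:
    """Hypothetical per-block statistics gathered over an observation window."""
    block_id: int
    reads: int              # number of reads that touched this block
    avg_io_bytes: int       # average size of those reads, in bytes
    random_fraction: float  # share of reads that were non-sequential (0.0 to 1.0)


def frequency_score(b: BlockStats) -> float:
    """Commodity approach: the most frequently read blocks win."""
    return float(b.reads)


def effectiveness_score(b: BlockStats) -> float:
    """Assumed composite score: frequency, weighted toward small and random I/O."""
    # 1.0 for reads of 4 KB or less, shrinking as the average read gets larger.
    size_weight = 4096 / max(b.avg_io_bytes, 4096)
    # Purely sequential reads keep a quarter of their weight; random reads keep all.
    random_weight = 0.25 + 0.75 * b.random_fraction
    return b.reads * size_weight * random_weight


def pick_blocks(stats: List[BlockStats], capacity: int,
                score: Callable[[BlockStats], float]) -> List[int]:
    """Return the ids of the `capacity` highest-scoring blocks."""
    ranked = sorted(stats, key=score, reverse=True)
    return [b.block_id for b in ranked[:capacity]]


# Made-up sample data: blocks 1 and 4 are hot but large and mostly sequential,
# blocks 2 and 3 are read less often but with small, random I/O.
STATS = [
    BlockStats(1, reads=100, avg_io_bytes=65536, random_fraction=0.1),
    BlockStats(2, reads=60, avg_io_bytes=4096, random_fraction=0.9),
    BlockStats(3, reads=40, avg_io_bytes=4096, random_fraction=1.0),
    BlockStats(4, reads=80, avg_io_bytes=32768, random_fraction=0.2),
]

print(pick_blocks(STATS, 2, frequency_score))      # [1, 4]
print(pick_blocks(STATS, 2, effectiveness_score))  # [2, 3]
```

With room for only two blocks, the frequency-only ranking fills the cache with the large, mostly sequential blocks, while the composite ranking keeps the small, random ones. That is the kind of trade-off a capacity-limited DRAM cache has to get right, whatever the real scoring looks like.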

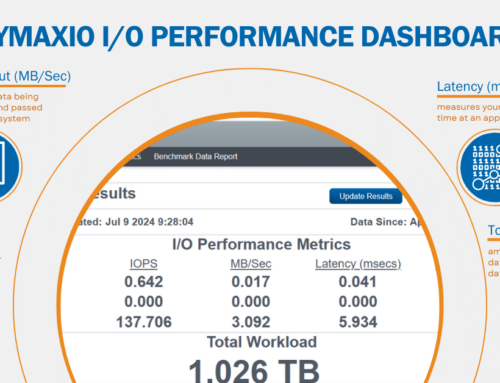

With V-locity 5, we examined data from 2,576 systems that tested V-locity and shared their before/after data with Condusiv servers. From that raw data, we verified that 43% of all systems experienced a greater than 50% reduction in read latency due to IntelliMemory. That’s a significant number in its own right for simply using available DRAM, and we can’t wait to see how much it jumps for our customers with V-locity 6.

Internal Iometer tests reveal that the latest version of IntelliMemory in V-locity 6.0 is 3.6X faster when processing 4K blocks and 2.0X faster when processing 64K blocks.

Jim Miller, Senior Analyst, Enterprise Management Associates, had this to say, “V-locity version 6.0 makes a very compelling argument for server-side DRAM caching by targeting small, random I/O – the culprit that dampens performance the most. This approach helps organizations improve business productivity by better utilizing the available DRAM they already have. However, considering the price evolution of DRAM, its speed, and proximity to the processor, some organizations may want to add additional memory for caching if they have data sets hungry for otherworldly performance gains.”

Finally, one of our customers, Rich Reitenauer, Manager of Infrastructure Management and Support, Alvernia University, had this to say, “Typical IT administrators respond to application performance issues by reactively throwing more expensive server and storage hardware at them, without understanding what the real problem is. Higher education budgets can’t afford that kind of brute-force approach. By trying V-locity I/O reduction software first, we were able to double the performance of our LMS app sitting on SQL, stop all complaints about performance, stop the application from timing out on students, and avoid an expensive forklift hardware upgrade.”
