After chatting with 50+ customers over the last three months, I’ve heard the same five questions often enough to turn them into a blog entry, and a lot of them have to do with flash:

1. Do Condusiv products still “defrag” like in the old days of Diskeeper?

No. Although users can still use Diskeeper to defrag manually if they choose, the core engines in Diskeeper and V-locity have nothing to do with defragmentation or physical disk management. The patented IntelliWrite® engine inside Diskeeper and V-locity adds a layer of intelligence to the Windows operating system, enabling it to improve the sequential nature of I/O traffic with large contiguous writes and subsequent reads, which benefits performance on both SSDs and HDDs. Because I/O is streamlined at the point of origin, fragmentation is prevented from ever becoming an issue in the first place. Although SSDs should never be “defragged,” fragmentation prevention has enormous benefits. It means processing a single I/O to read or write a 64KB file instead of needing several I/Os. This alleviates IOPS inflation of workloads to SSDs and cuts down on the number of erase cycles required to write any given file, improving write performance and extending flash reliability.
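To make the 64KB example concrete, here is a minimal sketch in Python. It assumes a deliberately simplified model in which every contiguous extent of a file costs at least one I/O request and no single request carries more than `max_io_bytes`. The function name, the 1 MiB cap, and the eight-fragment layout are illustrative assumptions, not measurements from Diskeeper or V-locity.

```python
import math

KB = 1024


def io_requests(extent_sizes_bytes, max_io_bytes=1024 * KB):
    """Count the I/O requests needed to transfer a file, extent by extent.

    Simplified model (an assumption for illustration only): each contiguous
    extent needs its own request(s), and no request exceeds max_io_bytes.
    """
    return sum(math.ceil(size / max_io_bytes) for size in extent_sizes_bytes)


# A 64KB file laid out contiguously: one extent.
contiguous = io_requests([64 * KB])

# The same 64KB file split into eight 8KB fragments (hypothetical layout).
fragmented = io_requests([8 * KB] * 8)

print(f"contiguous: {contiguous} I/O, fragmented: {fragmented} I/O")
```

In this model the contiguous file needs one request and the fragmented file needs eight, which is the kind of IOPS inflation the paragraph above describes.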

2. Why is it more important to solve Windows write inefficiencies in virtual environments regardless of flash or spindles on the backend?

Windows write inefficiencies are a problem in physical environments but an even bigger problem in virtual environments, because multiple instances of the OS sit on the same host, creating a choke point that all I/O must funnel through. It’s bad enough if one virtual server is taxed by Windows write inefficiencies and sends down twice as many I/O requests as it should to process any given workload. Now amplify that problem across all the VMs on the same host, and the result is a tsunami of unnecessary I/O overwhelming the host and the underlying storage subsystem. The performance penalty of all this unnecessary I/O is further exacerbated by the “I/O Blender,” which mixes and randomizes the I/O streams from all the VMs at the hypervisor before sending a very random pattern out to storage. That is the exact type of pattern that chokes flash performance the most: random writes. V-locity’s IntelliWrite® engine writes files in a contiguous manner, which significantly reduces the amount of I/O required to write or read any given file. In addition, IntelliMemory® caches reads in available DRAM. With both engines reducing I/O to storage, the usual requirement of 80K I/O to process 1GB drops to 60K I/O as a minimum improvement, and often to 50K I/O or 40K I/O. This is why the typical V-locity customer sees anywhere from 50-100% more throughput regardless of flash or spindles on the backend: all the optimization occurs where I/O originates.
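The I/O counts quoted above translate directly into average I/O size, and, under one extra assumption, into throughput. The sketch below is plain arithmetic in Python; the assumption (mine, not stated in the text above) is that the storage sustains the same number of I/O operations per second before and after, so any gain comes purely from each I/O carrying more data. The function names are hypothetical.

```python
GB = 1024 ** 3


def avg_io_size_kb(io_count, data_bytes=GB):
    """Average payload per I/O, in KB, when data_bytes takes io_count I/Os."""
    return data_bytes / io_count / 1024


def throughput_gain_pct(baseline_ios, reduced_ios):
    """Extra throughput, in percent, if IOPS stays constant (assumption)
    while the same data needs reduced_ios instead of baseline_ios."""
    return (baseline_ios / reduced_ios - 1) * 100


baseline = 80_000  # I/Os per GB, the baseline figure quoted in the text
for reduced in (60_000, 50_000, 40_000):
    print(
        f"{baseline} -> {reduced} I/O per GB: "
        f"avg I/O size {avg_io_size_kb(baseline):.1f} KB -> "
        f"{avg_io_size_kb(reduced):.1f} KB, "
        f"throughput gain ~{throughput_gain_pct(baseline, reduced):.0f}%"
    )
```

Under that constant-IOPS assumption, going from 80K to 60K, 50K, and 40K I/O per GB works out to roughly 33%, 60%, and 100% more throughput, which is broadly in line with the range quoted above.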

VMware’s own “vSphere Monitoring and Performance Guide” calls for “defragmentation of the file system on all guests” as its top performance best-practice tip after adding more memory. With V-locity, nothing ever has to be “defragged,” since fragmentation is prevented from ever becoming a problem in the first place.

3. How does V-locity help with flash storage?

One of the most common misconceptions is that V-locity is the perfect complement to spindles, but not to flash. That misconception couldn’t be further from the truth. The fact is, most V-locity customers run V-locity on top of a hybrid (flash & spindles) array or an all-flash array. This is because, without V-locity, the underlying storage subsystem has to process at least 35% more I/O than necessary for any given workload.

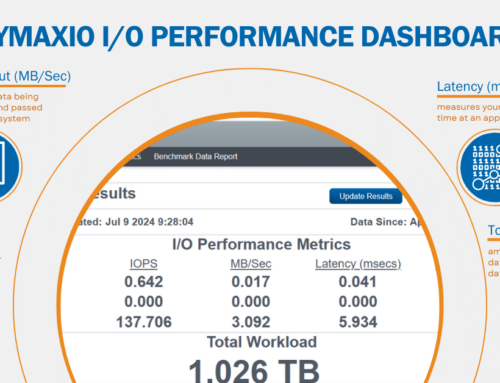

As great as virtualization has been for server efficiency, the one downside is the complexity it introduces to the data path, resulting in I/O that is much smaller, more fractured, and more random than it needs to be. This means flash storage systems process workloads 30-50% slower than they should, because performance suffers death by a thousand cuts from all this tiny, random I/O that inflates IOPS and chews up throughput. V-locity streamlines I/O to be much more efficient, so twice as much data can be carried with each I/O operation. This significantly improves flash write performance and extends flash reliability through reduced erase cycles. In addition, V-locity establishes a tier-0 caching strategy using idle, available DRAM to cache reads. As little as 3GB of available memory drives an average 40% reduction in response time (see source). By optimizing both writes and reads, V-locity drives down the amount of I/O required to process any given workload. Instead of needing 80K I/O to process a GB of data, users typically need only 50K I/O, or sometimes even less.
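For readers who want intuition on why serving reads from DRAM moves response time so much, the standard cache model is a weighted average of hit and miss latency. The sketch below is a generic illustration in Python, not a description of how V-locity works internally or how the figures above were measured; the latency values and hit ratios are hypothetical.

```python
def avg_read_latency_ms(hit_ratio, cache_ms, storage_ms):
    """Expected read latency for a cache in front of storage.

    hit_ratio is the fraction of reads served from cache (0.0 to 1.0).
    """
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return hit_ratio * cache_ms + (1.0 - hit_ratio) * storage_ms


# Hypothetical latencies, chosen only to illustrate the shape of the curve.
STORAGE_MS = 1.0   # assumed round trip to the storage array
CACHE_MS = 0.01    # assumed DRAM-served read

for hit_ratio in (0.0, 0.2, 0.4, 0.6):
    latency = avg_read_latency_ms(hit_ratio, CACHE_MS, STORAGE_MS)
    reduction = (1.0 - latency / STORAGE_MS) * 100
    print(f"hit ratio {hit_ratio:.0%}: {latency:.3f} ms ({reduction:.0f}% lower)")
```

Because a DRAM hit costs almost nothing next to a trip to storage, the reduction in average read latency tracks the hit ratio closely in this model.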

For more on how V-locity complements hybrid storage or all-flash storage, listen to the following OnDemand Webinar I did with a flash storage vendor (Nimble) and a mutual customer who uses hybrid storage + V-locity for a best-of-breed approach for I/O performance.

4. Is V-locity’s DRAM caching engine starving my applications of precious memory by caching?

No. V-locity dynamically uses only what Windows sees as available memory and throttles back if an application requires more, ensuring there is never an issue of resource contention or memory starvation. V-locity even keeps a buffer so there is never a latency issue in handing memory back. ESG Labs examined the last 3,500 VMs that tested V-locity and noted a 40% average reduction in response time (see source). This technology has been battle-tested over 5 years across millions of licenses with some of the largest OEMs in the industry.
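The general idea of "use only idle memory, keep a buffer, and give it back on demand" can be sketched in a few lines of Python. This is a hypothetical policy written for illustration only; it is not V-locity's actual algorithm, and the function name, the 16GB host, and the 1GB buffer are all assumptions.

```python
GB = 1024 ** 3


def cache_target_bytes(total_bytes, app_used_bytes, buffer_bytes):
    """Hypothetical sizing policy: the cache may use only memory that is
    idle after applications take what they need and a safety buffer is
    set aside. Never returns a negative size."""
    idle = total_bytes - app_used_bytes - buffer_bytes
    return max(0, idle)


TOTAL = 16 * GB   # assumed memory on the host
BUFFER = 1 * GB   # assumed headroom kept free for sudden application demand

# As application demand grows, the cache target shrinks toward zero.
targets = []
for app_used_gb in (8, 12, 15, 16):
    target = cache_target_bytes(TOTAL, app_used_gb * GB, BUFFER)
    targets.append(target // GB)
    print(f"apps use {app_used_gb} GB -> cache may use {target // GB} GB")
```

The point of the sketch is the ordering of priorities: applications first, buffer second, cache last, so the cache yields memory before an application ever has to wait for it.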

5. What is the difference between V-locity and Diskeeper?

Diskeeper is for physical servers, while V-locity is for virtual servers. Diskeeper is priced per OS instance, while V-locity is now priced per host, meaning V-locity can be installed on any number of virtual servers on that host. Diskeeper Professional is for physical clients. The main feature difference is that Diskeeper keeps physical servers and clients running like new, whereas V-locity accelerates applications. Both Diskeeper and V-locity solve Windows write inefficiencies at the point of origin where I/O is created, but V-locity goes a step further by caching reads in idle, available DRAM for 50-300% faster application performance. Diskeeper customers who have virtualized can opt to convert their Diskeeper licenses to V-locity licenses to drive value to their virtualized infrastructure.

Stay tuned for the next major release of Diskeeper, coming soon, which may inherit similar functionality from V-locity.
